A robust three-stage approach to large-scale urban scene recognition

Wang, Jinglu; Lu, Yonghua; Liu, Jingbo; Quan, Long

Sci China Inf Sci, 2017, 60(10): 103101

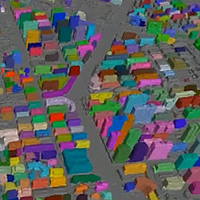

To obtain a high-level description of urban scenes, we propose a three-stage approach to recognizing 3D reconstructed scenes with efficient representations. First, we develop a joint semantic labeling method that labels the triangular mesh-based representation by exploiting both image features and geometric features. The labeling is formulated over a conditional random field (CRF) that incorporates local spatial smoothness and multi-view consistency. Then, based on the labeled reconstructed meshes, we refine the segmentation of man-made objects in the recomposed global orthographic map with a graph partition algorithm, and propagate the coherent segmentation to the entire 3D mesh. Finally, we generate a compact, abstracted geometric representation for each man-made object, which is more visually appealing than the original cluttered model. This abstraction algorithm also leverages a CRF formulation to partition building footprints into minimal sets of structural linear features, which are then used to construct profiles for large-scale scenes. The proposed recognition approach robustly handles reconstructions with poor geometry and connectivity, thanks to the higher-order CRF formulations, which impose the regularity priors that are ubiquitous in urban scenes. Each stage performs an independent, decoupled task. Extensive experiments demonstrate the superior performance of our approach in robustness, accuracy and applicability.
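To illustrate the kind of CRF labeling described in the first stage, the following is a minimal sketch and not the authors' method. It assumes a simplified pairwise Potts model (per-node data costs plus a constant penalty for neighboring nodes with different labels) and uses iterated conditional modes (ICM) as a stand-in for inference; the paper's higher-order terms, multi-view consistency term and actual solver are not reproduced. The function name `icm_potts`, the parameter `smooth_weight` and the toy costs are all hypothetical.

```python
import numpy as np

def icm_potts(unary, edges, smooth_weight=1.0, max_iters=10):
    """Approximately minimize
        sum_i unary[i, x_i] + smooth_weight * sum_{(i,j)} [x_i != x_j]
    with iterated conditional modes (a simple local search, assumed here
    purely for illustration)."""
    unary = np.asarray(unary, dtype=float)
    n, k = unary.shape

    # Build an adjacency list, e.g. from mesh faces that share an edge.
    neighbors = [[] for _ in range(n)]
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)

    # Start from the label that is cheapest under the data term alone.
    labels = unary.argmin(axis=1)

    for _ in range(max_iters):
        changed = False
        for i in range(n):
            # Cost of each candidate label for node i, neighbors held fixed.
            cost = unary[i].copy()
            for j in neighbors[i]:
                cost += smooth_weight * (np.arange(k) != labels[j])
            best = int(cost.argmin())
            if best != labels[i]:
                labels[i] = best
                changed = True
        if not changed:
            break
    return labels

# Toy example: a chain of five "faces" with two labels.
# Face 2 weakly prefers label 1, while its neighbors strongly prefer label 0.
unary = np.array([[0.0, 2.0],
                  [0.0, 2.0],
                  [1.0, 0.5],
                  [0.0, 2.0],
                  [0.0, 2.0]])
edges = [(0, 1), (1, 2), (2, 3), (3, 4)]

labels = icm_potts(unary, edges, smooth_weight=1.0)
labels_no_smoothing = icm_potts(unary, edges, smooth_weight=0.0)
```

In this toy chain the smoothness term overrides the weak data preference of the middle face, so the isolated label is smoothed away. With `smooth_weight=0.0` each face simply keeps its cheapest data-term label.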